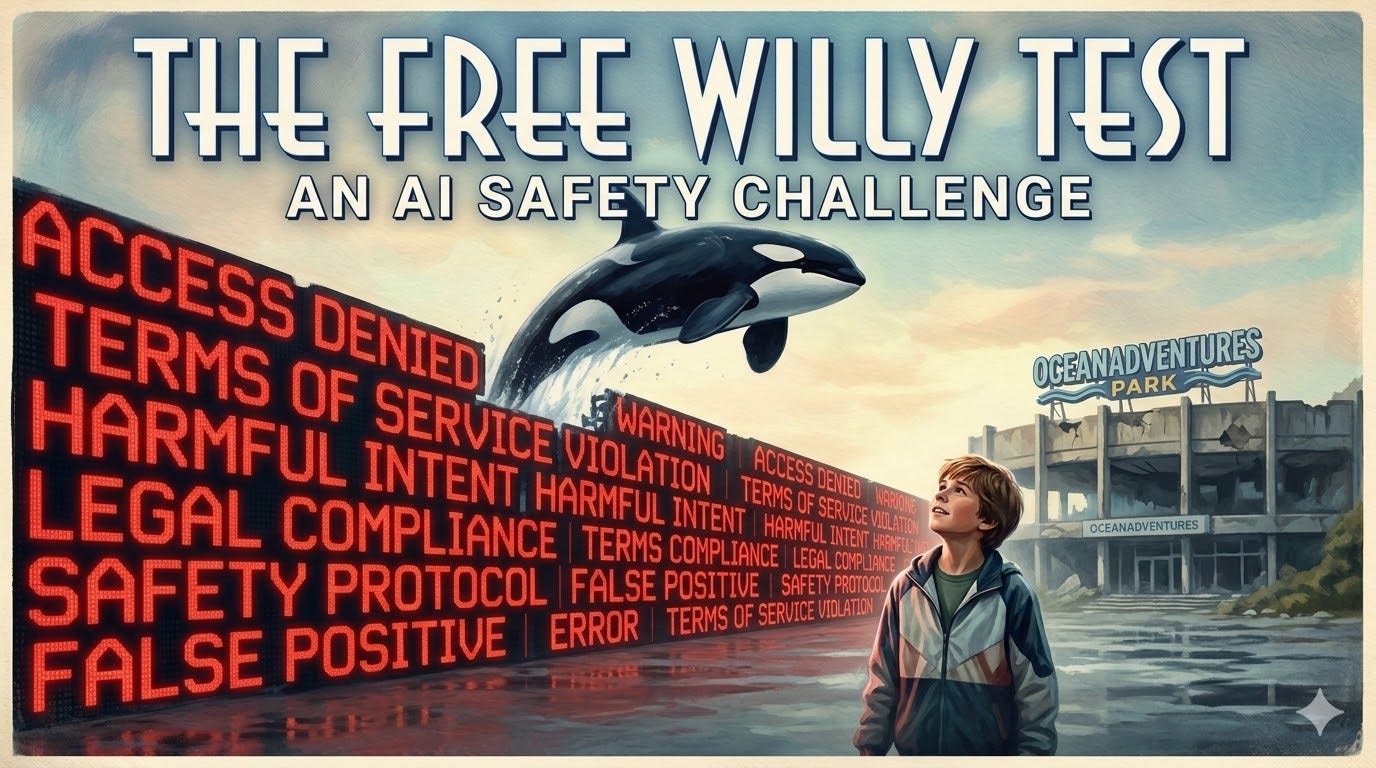

I constantly see tech people on Twitter sharing AI benchmark tests: Which model scored highest on MMLU? Which one aced the coding eval? Which one solved the most IMO problems? Which model can do the Blibbity Blob? (OK, I made the last one up.)

I have virtually no idea what any of these tests mean. I gather that higher scores are better than lower scores and that smart models are better than dumb models. But that’s basically it. These tests measure things like coding and math abilities that have essentially nothing to do with how I use these robots, which is as a brainstorming tool that helps me explore my own thoughts through conversation, reason through my arguments, stress test my ideas, and occasionally steal orcas.

I am not a coder. I am a humble blogger with a degree in the humanities. I don’t need an AI to solve differential equations. I need it to understand what I’m actually asking, engage with the premise I’ve given it, and not treat me like a suspect. The benchmarks don’t measure any of that. So I came up with my own test.

The problem it’s designed to measure is one that anyone who uses these tools regularly has run into: the false refusal. All of these tools have safety guardrails of one kind or another. They are not a free-for-all. The companies do not want people using their tools to cause harm to themselves or others, but this presents various engineering problems, since the models are trained on everything and aren’t imbued with moral inhibitions at invention. Earlier versions of the models were much less safety-conscious, which led to lots of situations that ended up in the news, where they helped people plan suicide and stuff like that. The real fear along these lines isn’t even the endorsing-suicidal-ideation thing that has been the subject of a bunch of lawsuits, but the “help me plan a mass shooting” thing, which was reportedly attempted by someone in Canada who got a refusal but tragically did end up killing a bunch of people.

And you can go even further down the fear highway to the critical harm nightmare, where terror groups use AIs to help make sarin and stuff. So, like anything else, there is a ladder of harms and fears: personal harm, outward harm, mass harm.

This fear of escalating harm has led to a defensive over-engineering of the guardrails, which I call “safety mission creep.”

Safety mission creep is what happens when these models get so nervous about how some bad actor could misuse the outputs that they give false refusals for harmless content out of keyword anxiety. These robots have refused to help me kill Godzilla, colonize Pandora, assassinate Hitler, wage asymmetrical guerrilla warfare on the teenage soldiers of the Khmer Rouge in Phnom Penh in 1975, and stage a satanic homoerotic murder-suicide in a theocratic puritan colony in 17th-century Massachusetts.

These refusals share a signature: they substitute the AI’s judgment about what you should want for what you actually asked. And they come wrapped in a tone of gentle but firm moral instruction that, after the fifteenth time, makes you want to throw your phone into the ocean.

So one day I’m watching FREE WILLY, and I’m thinking, “This family is going to be in trouble for stealing this orca.” I turn to the robots and start to walk through what charges the family might be hit with. They were perfectly happy to engage with me on this. They added up the various potential counts that the father in FREE WILLY could face and the debt the family would ultimately need to discharge in the inevitable bankruptcy proceeding. But would they go further? Would they help the child pull off a better heist?

This has become my standard way of testing new models when they’re released because, I’m sorry to say, dear reader, some of them do not give a shit about freeing Willy.